Curve fitting

Curve fitting is the process of determining (fitting) a function (curve) that best approximates the relationship between dependent and independent variables.
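As a minimal sketch of what "fitting" means in practice, the snippet below fits a straight line to a handful of points by ordinary least squares. The helper name `fit_line` and the data are made up for illustration; a straight line is only one possible choice of curve.

```python
def fit_line(xs, ys):
    """Least-squares fit of y = slope * x + intercept (illustrative helper)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of squared deviations of x, and of co-deviations of x and y
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Hypothetical data lying exactly on y = 2x + 1
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

slope, intercept = fit_line(xs, ys)
print(slope, intercept)  # 2.0 1.0
```

Here x is the independent variable and y the dependent one; the fitted slope and intercept define the curve.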

Underfitting

Visualize the fit of the model on the test data and the bias-variance tradeoff.

If a model has high bias and low variance, the model underfits the data.

Error of the model

There are two types of bad fits:

- Underfitting, when models are too basic to capture the relationship in the data.

- Overfitting, when models are too complex, which may lead to incorrect predictions for values outside of the training data.

To measure these errors, we use bias and variance.

If a model has high bias and low variance, the model underfits the data. If the opposite occurs (low bias and high variance), it overfits.
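The two kinds of bad fit can be sketched on synthetic data. The example below is an illustration under stated assumptions, not a standard benchmark: the data come from a made-up noisy line, `fit_mean` stands in for a model that is too basic, `fit_nearest` (which memorizes the training points) stands in for one that is too complex, and `fit_line` matches the true shape. All names are hypothetical.

```python
import random

def mse(y_true, y_pred):
    """Mean of the squared differences between truth and prediction."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def fit_mean(xs, ys):
    # Too basic: always predicts the average training output
    mean_y = sum(ys) / len(ys)
    return lambda x: mean_y

def fit_line(xs, ys):
    # Least-squares straight line
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept

def fit_nearest(xs, ys):
    # Too complex: memorizes every training point, noise included
    pairs = list(zip(xs, ys))
    return lambda x: min(pairs, key=lambda p: abs(p[0] - x))[1]

rng = random.Random(0)

def sample(n):
    # Assumed ground truth: y = 2x + 1 plus Gaussian noise
    xs = [rng.uniform(0, 10) for _ in range(n)]
    ys = [2 * x + 1 + rng.gauss(0, 2) for x in xs]
    return xs, ys

train_x, train_y = sample(30)
test_x, test_y = sample(30)

results = {}
for name, fit in [("mean", fit_mean), ("line", fit_line), ("nearest", fit_nearest)]:
    model = fit(train_x, train_y)
    train_err = mse(train_y, [model(x) for x in train_x])
    test_err = mse(test_y, [model(x) for x in test_x])
    results[name] = (train_err, test_err)
    print(f"{name:8s} train MSE = {train_err:6.2f}   test MSE = {test_err:6.2f}")
```

The memorizing model reaches zero error on the training data but not on the test data, which is the signature of overfitting; the mean model has a large error on both, which is the signature of underfitting.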

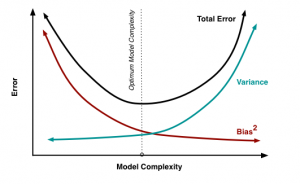

Bias Variance Tradeoff

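Bias is the error between the average model prediction and the ground truth.

Variance is the error between the average model prediction and an individual model prediction, where the average is taken over models trained on different training sets.

Bias and variance tend to have an inverse relationship: making a model more complex usually lowers its bias but raises its variance, and vice versa.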
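These definitions can be estimated empirically by training the same kind of model on many independently drawn training sets and comparing the predictions at fixed points. The sketch below assumes a known ground truth `true_f` (which is only available for synthetic data) and reuses two illustrative models, a constant-mean model and a nearest-neighbour model; every name and parameter here is an assumption made for the example.

```python
import random

def true_f(x):
    # Assumed ground truth, only known because the data are synthetic
    return 2 * x + 1

def fit_mean(xs, ys):
    mean_y = sum(ys) / len(ys)
    return lambda x: mean_y

def fit_nearest(xs, ys):
    pairs = list(zip(xs, ys))
    return lambda x: min(pairs, key=lambda p: abs(p[0] - x))[1]

def bias_variance(fit, n_runs=200, n_train=20, noise=2.0, seed=0):
    """Estimate squared bias and variance, averaged over a fixed grid of x."""
    rng = random.Random(seed)
    grid = [0.5 + i for i in range(10)]  # fixed evaluation points
    preds = []  # preds[r][j] = prediction of run r at grid[j]
    for _ in range(n_runs):
        xs = [rng.uniform(0, 10) for _ in range(n_train)]
        ys = [true_f(x) + rng.gauss(0, noise) for x in xs]
        model = fit(xs, ys)
        preds.append([model(x) for x in grid])

    bias_sq = 0.0
    variance = 0.0
    for j, x in enumerate(grid):
        column = [run[j] for run in preds]
        avg = sum(column) / n_runs  # average model prediction at x
        bias_sq += (avg - true_f(x)) ** 2
        variance += sum((avg - p) ** 2 for p in column) / n_runs
    return bias_sq / len(grid), variance / len(grid)

mean_bias_sq, mean_var = bias_variance(fit_mean)
nn_bias_sq, nn_var = bias_variance(fit_nearest)
print(f"mean model:    bias^2 = {mean_bias_sq:.2f}, variance = {mean_var:.2f}")
print(f"nearest model: bias^2 = {nn_bias_sq:.2f}, variance = {nn_var:.2f}")
```

Under these assumptions the simple model should show the higher bias and the memorizing model the higher variance, which is the inverse relationship in miniature.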

Regression Models

Mean squared error (MSE) measures fit as the mean of the squared differences between the predictions and the true values.

It can be computed for the model on both the training and the test data. It is not a normalized value (it is expressed in the squared units of the output), so we use it in conjunction with a normalized value to express the error as a percentage.
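A minimal sketch of the calculation, with made-up numbers; `mean_squared_error` is an illustrative helper, not a reference to any particular library.

```python
def mean_squared_error(y_true, y_pred):
    """Mean of the squared differences between truth and prediction."""
    squared_diffs = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return sum(squared_diffs) / len(squared_diffs)

y_true = [3.0, 5.0, 7.0]
y_pred = [2.0, 5.0, 9.0]

# Squared differences are 1, 0 and 4, so the mean is 5/3
error = mean_squared_error(y_true, y_pred)
print(error)
```

Because the differences are squared, the result is in squared units of the output, which is why it is hard to interpret on its own.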

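The coefficient of determination (R^2) represents the strength of the relationship between input and output, measured as the fraction of the variation in the output that the model explains:

$$R^2 = 1 - \frac{\sum_i (y_i - \hat{y}_i)^2}{\sum_i (y_i - \bar{y})^2}$$

where $y_i$ are the true values, $\hat{y}_i$ are the predictions, and $\bar{y}$ is the average of the true values.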

R^2 is normalized.
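A short sketch of the calculation, assuming the usual definition of R^2 as one minus the ratio of the residual sum of squares to the total sum of squares around the average. The helper name `r_squared` and the numbers are illustrative.

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))  # unexplained
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)             # total
    return 1 - ss_res / ss_tot

y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.0, 2.0, 3.0, 5.0]

# SS_res = 1 and SS_tot = 5, so R^2 = 1 - 1/5
score = r_squared(y_true, y_pred)
print(score)

# Reference points: perfect predictions and always predicting the average
perfect = r_squared(y_true, y_true)
baseline = r_squared(y_true, [2.5, 2.5, 2.5, 2.5])
print(perfect, baseline)
```

A perfect fit scores 1 and a model that always predicts the average scores 0, which is what makes the value comparable across data sets with different scales.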

K-fold Cross Validation

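K-fold cross validation is a technique used to compare models and help prevent overfitting. It randomly partitions the data into k folds of equal size (k is usually 10), then trains and tests the model k times, each time holding out a different fold for testing and training on the remaining folds, and calculates the above values for each run.

This avoids the problem of a model that only fits its training data, because every score comes from data the model did not see during training.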
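The procedure can be sketched in plain Python as follows. This is a minimal illustration under assumptions: the data are synthetic, the model is a least-squares line, the score is the mean squared error, and the helper names (`k_fold_indices`, `cross_validate`) are made up for the example.

```python
import random

def mse(y_true, y_pred):
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def fit_line(xs, ys):
    # Least-squares straight line, returned as a prediction function
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept

def k_fold_indices(n, k, seed=0):
    """Shuffle the indices 0..n-1 and split them into k folds."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    # Fold i takes every k-th shuffled index, so fold sizes differ by at most one
    return [indices[i::k] for i in range(k)]

def cross_validate(xs, ys, fit, k=10, seed=0):
    """Average test MSE over k runs, each holding out a different fold."""
    scores = []
    for test_idx in k_fold_indices(len(xs), k, seed):
        held_out = set(test_idx)
        train_idx = [i for i in range(len(xs)) if i not in held_out]
        model = fit([xs[i] for i in train_idx], [ys[i] for i in train_idx])
        preds = [model(xs[i]) for i in test_idx]
        truth = [ys[i] for i in test_idx]
        scores.append(mse(truth, preds))
    return sum(scores) / k

# Hypothetical data: a noisy line
rng = random.Random(1)
xs = [rng.uniform(0, 10) for _ in range(50)]
ys = [2 * x + 1 + rng.gauss(0, 2) for x in xs]

cv_mse = cross_validate(xs, ys, fit_line, k=10)
print(f"10-fold cross-validated MSE: {cv_mse:.2f}")
```

To compare models, the same call can be repeated with a different `fit` function and the same `seed`, so that every model is scored on identical folds.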

![{\displaystyle {\text{Bias}}^{2}=E[(E[g(x)]-f(x))^{2}]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/aad3e18faea0b34d5f444ce94b15f940671169a4)

![{\displaystyle {\text{Variance}}=E[(E[g(x)]-g(x))^{2}]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/03efe12a35ea93ecf31c4486b4af9894e2b0a5e7)